At the F8 conference, Facebook showed off its ability to recognize objects so they can be manipulated in a virtual world.

Many of us spend a lot of time looking at screens when we’re out in the world, but the ultimate goal of augmented reality is to have us looking through them. Today, at Facebook’s F8 conference keynote, Mark Zuckerberg outlined a plan to make AR a major facet of the company’s path toward total ownership of your eyeballs. The Camera Effects Platform is built around the imaging devices already built into smartphones and tablets, which Zuckerberg wants to further cement as the “first mainstream augmented reality platform.”

All the Facebook apps now include camera integration in hopes of converting the smartphone into a semi-transparent, Facebook-powered, head-up display that filters the world, providing information and distractions alike along the way. The first two pieces of Facebook's AR puzzle are Frame Studio, for creating interactive wrappers that can surround images and videos in the News Feed, and AR Studio, for developing more complex functionality.

The Camera Effects Platform is going into closed beta today, and Zuckerberg readily admits that this system of using smartphones to view an augmented world is a step toward the inevitable head-mounted device (he specifically called them “glasses”). Of course, head-mounted AR isn't new, but it also hasn't exactly caught on with typical users.

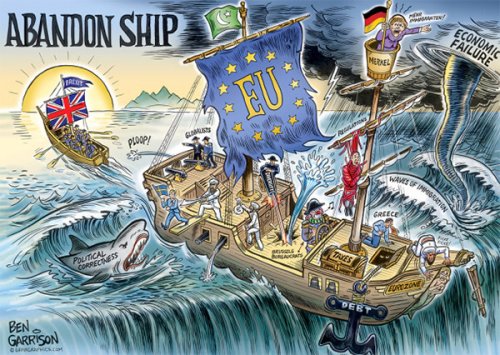

The system is grounded in Simultaneous Localization and Mapping (SLAM) technology, which gives it the ability to determine spatial relationships between objects in a scene. SLAM is already a familiar term for those in the AR, VR, and robotics industries. The company claims to have made major leaps in its object-recognition technology in the past few years, which allow the software to recognize things in the frame and manipulate them for the viewer’s experience. It can recognize people, as well as individual body parts and poses. Judging from the demonstrations, it’s also extremely good at recognizing a cup of coffee.

Facebook's object recognition technology is a big part of its ability to push augmented reality.

Location data will also play a big part in helping to recognize where a user is and how they might be able to interact with the environment. So, if you’re looking at a menu in a restaurant, you may also see augmented reality reviews and menu suggestions from friends who have dined there in the past.

Some of the other demos given during the keynote look similarly impressive. A tower defense game, for instance, is played by multiple people slapping AR bad guys off a real table in the physical world. Another demo showed an image of a real room filled with virtual objects like water and bouncy balls, which react to objects in the room. You can also see other Facebook tech baked into the demos, like the ability to shop for a product you see by simply clicking on it. This kind of AR tech is also a big talking point for Samsung and its new virtual assistant Bixby, which is built into the Galaxy S8 smartphone.

That underlying theme of commerce is pervasive, which shouldn't come as a surprise. AR effectively shrinks the gap between a user seeing a desirable item and being able to purchase it. There are also big implications for Facebook's multibillion-dollar advertising business. Personalized data that can be used to target advertising is very valuable at the moment, so concerns about an increasing number of products invading our augmented realities aren't unfounded.

For now, the Camera Effects Platform is grounded in more familiar uses, like adding reactive filters and backgrounds to our selfies, which brings us to the matter of Snapchat.

Earlier today, Snapchat released an update that takes its AR experience from the smartphone’s front-facing camera—or the selfie camera, if you will—and projects it into the real world through the rear-facing camera. Now you can plop a smiling rainbow out into the world using Snapchat’s popular filtering technology.

Snapchat’s new features really are impressive, but Facebook is offering a much broader view of what it’s hoping to accomplish, by acting as a digital filter to the real world for both consumers and creators.

To foster support for its AR offerings, Facebook also announced AR Studio, a tool meant to let content creators make digital objects without the need for coding knowledge. The demo included the creation of a space helmet for a video game that certainly wouldn’t look out of place as one of the many Snapchat facial filters.

For the moment, AR will co-exist with virtual reality, especially considering Facebook’s massive investment in Oculus. The company announced a new initiative called Spaces, which is built for creating virtual reality parties.

This is the first time some of this object recognition technology is going to be made available to developers outside Facebook proper.

Without having tried it, the whole thing evokes Second Life, a mostly-dead platform that tried to create a virtual reality in a world before headsets and consumer-grade 360-degree cameras. In a way, Spaces almost feels like the inverse of the AR camera platform, providing glimpses of the real world through virtual screens and shared photos in a cartoony world populated by avatars.

One of the big takeaways from the keynote, however, is that Facebook is looking at this as a first step down the road toward immersion. Speakers frequently mentioned that this shift to augmented and virtual reality is “one percent” done. The goal, it seems, is for these technologies to continue to converge. The ideal balance between real world and virtual existence seems fluid for the moment, but it’s clear that Facebook wants to be a large part of wherever we land.
